No preamble, straight to the useful part.

Problem details

Troubleshooting

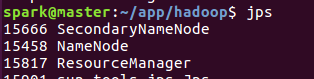

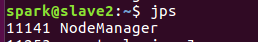

spark@master:~/app/hadoop$ sbin/start-all.sh
This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh
Starting namenodes on [master]
master: starting namenode, logging to /home/spark/app/hadoop-2.7.3/logs/hadoop-spark-namenode-master.out
slave1: starting datanode, logging to /home/spark/app/hadoop-2.7.3/logs/hadoop-spark-datanode-slave1.out
slave2: starting datanode, logging to /home/spark/app/hadoop-2.7.3/logs/hadoop-spark-datanode-slave2.out
Starting secondary namenodes [master]
master: starting secondarynamenode, logging to /home/spark/app/hadoop-2.7.3/logs/hadoop-spark-secondarynamenode-master.out
starting yarn daemons
starting resourcemanager, logging to /home/spark/app/hadoop-2.7.3/logs/yarn-spark-resourcemanager-master.out
slave2: starting nodemanager, logging to /home/spark/app/hadoop-2.7.3/logs/yarn-spark-nodemanager-slave2.out
slave1: starting nodemanager, logging to /home/spark/app/hadoop-2.7.3/logs/yarn-spark-nodemanager-slave1.out
spark@master:~/app/hadoop$ jps
15666 SecondaryNameNode
15458 NameNode
15817 ResourceManager
15901 sun.tools.jps.Jps
spark@master:~/app/hadoop$
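Checking `jps` by eye on every node gets tedious, so here is a minimal Python sketch that compares a `jps` listing against the daemons a node is expected to run. The role names and the `EXPECTED_DAEMONS` mapping are assumptions based on the layout in this post (NameNode, SecondaryNameNode and ResourceManager on master; DataNode and NodeManager on the slaves), and `missing_daemons` is a hypothetical helper, not part of Hadoop.

```python
# Assumed role-to-daemon layout for the cluster in this post (hypothetical mapping).
EXPECTED_DAEMONS = {
    "master": {"NameNode", "SecondaryNameNode", "ResourceManager"},
    "slave": {"DataNode", "NodeManager"},
}


def missing_daemons(jps_output: str, role: str) -> set:
    """Return the expected daemons for `role` that do not appear in `jps_output`."""
    running = set()
    for line in jps_output.splitlines():
        parts = line.split()
        if len(parts) >= 2:
            # jps prints "<pid> <class name>"; keep only the short class name.
            running.add(parts[1].rsplit(".", 1)[-1])
    return EXPECTED_DAEMONS[role] - running


if __name__ == "__main__":
    master_jps = """15666 SecondaryNameNode
15458 NameNode
15817 ResourceManager
15901 sun.tools.jps.Jps"""
    print(missing_daemons(master_jps, "master"))

    # Made-up slave listing, for illustration only.
    slave_jps = """3021 NodeManager
3100 Jps"""
    print(missing_daemons(slave_jps, "slave"))
```

An empty result for the master listing means every expected daemon is up there. A result containing `DataNode` for a slave points to that node's DataNode log as the next place to look.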

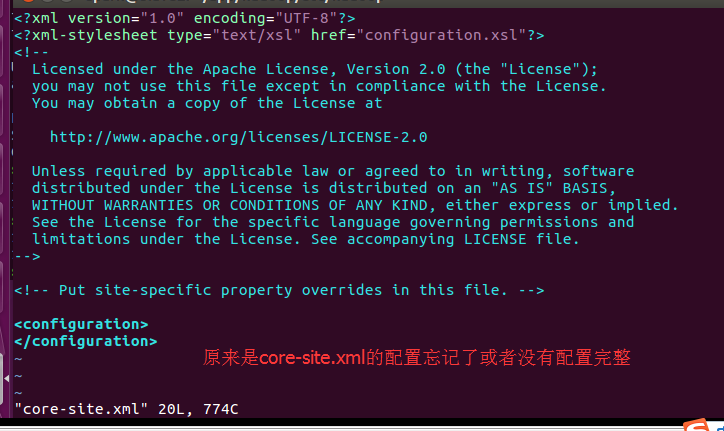

Solution

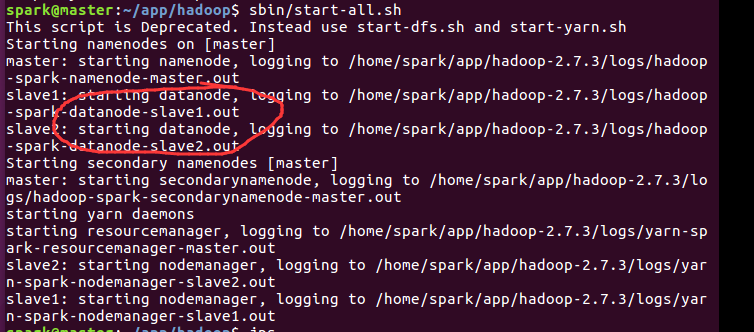

spark@slave1:~/app/hadoop-2.7.3/logs$ cat hadoop-spark-datanode-slave1.log
2017-06-07 00:33:19,588 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: STARTUP_MSG:
/************************************************************
STARTUP_MSG: Starting DataNode
STARTUP_MSG: host = slave1/192.168.80.146
STARTUP_MSG: args = []
STARTUP_MSG: version = 2.7.3
STARTUP_MSG: classpath = /home/spark/app/hadoop-2.7.3/etc/hadoop:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jackson-jaxrs-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/slf4j-api-1.7.10.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-io-2.4.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-net-3.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/htrace-core-3.1.0-incubating.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jackson-mapper-asl-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jersey-server-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-configuration-1.6.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jackson-core-asl-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/apacheds-kerberos-codec-2.0.0-M15.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/hamcrest-core-1.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/snappy-java-1.0.4.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-digester-1.8.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/guava-11.0.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/apacheds-i18n-2.0.0-M15.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/gson-2.2.4.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/netty-3.6.2.Final.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/servlet-api-2.5.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jackson-xc-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/hadoop-auth-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jsp-api-2.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/httpclient-4.2.5.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-collections-3.2.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/xmlenc-0.52.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jersey-core-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/curator-client-2.7.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jsch-0.1.42.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/httpcore-4.2.5.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-cli-1.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-lang-2.6.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jets3t-0.9.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/activation-1.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/xz-1.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-logging-1.1.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/paranamer-2.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-compress-1.4.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/curator-framework-2.7.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/asm-3.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/junit-4.11.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/api-asn1-api-1.0.0-M20.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jaxb-impl-2.2.3-1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/curator-recipes-2.7.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/protobuf-java-2.5.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jersey-json-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-math3-3.1.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/avro-1.7.4.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/zookeeper-3.4.6.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-beanutils-1.7.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-beanutils-core-1.8.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-httpclient-3.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/stax-api-1.0-2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jetty-6.1.26.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/commons-codec-1.4.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jettison-1.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/hadoop-annotations-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jetty-util-6.1.26.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jaxb-api-2.2.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/java-xmlbuilder-0.4.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/mockito-all-1.8.5.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/log4j-1.2.17.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/api-util-1.0.0-M20.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/lib/jsr305-3.0.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3-tests.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/common/hadoop-nfs-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-io-2.4.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/htrace-core-3.1.0-incubating.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/jackson-mapper-asl-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/jersey-server-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/jackson-core-asl-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/leveldbjni-all-1.8.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/guava-11.0.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/netty-3.6.2.Final.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/servlet-api-2.5.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/xmlenc-0.52.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/jersey-core-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-cli-1.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/netty-all-4.0.23.Final.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-lang-2.6.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-daemon-1.0.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-logging-1.1.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/asm-3.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/xercesImpl-2.9.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/xml-apis-1.3.04.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/protobuf-java-2.5.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/jetty-6.1.26.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-codec-1.4.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/jetty-util-6.1.26.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/log4j-1.2.17.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/lib/jsr305-3.0.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-nfs-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-2.7.3-tests.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-jaxrs-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/commons-io-2.4.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-mapper-asl-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-server-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-core-asl-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/leveldbjni-all-1.8.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/guava-11.0.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/netty-3.6.2.Final.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/servlet-api-2.5.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/zookeeper-3.4.6-tests.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-xc-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/commons-collections-3.2.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-core-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-guice-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/commons-cli-1.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/commons-lang-2.6.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/activation-1.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/xz-1.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/aopalliance-1.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/commons-logging-1.1.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/commons-compress-1.4.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/asm-3.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/guice-servlet-3.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jaxb-impl-2.2.3-1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/protobuf-java-2.5.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-json-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/javax.inject-1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-client-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/zookeeper-3.4.6.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/stax-api-1.0-2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jetty-6.1.26.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/commons-codec-1.4.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jettison-1.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jetty-util-6.1.26.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/guice-3.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jaxb-api-2.2.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/log4j-1.2.17.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/lib/jsr305-3.0.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-client-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-tests-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-applications-distributedshell-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-resourcemanager-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-applicationhistoryservice-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-nodemanager-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-common-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-web-proxy-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-sharedcachemanager-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-api-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-common-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-applications-unmanaged-am-launcher-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-registry-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/commons-io-2.4.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/jackson-mapper-asl-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-server-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/jackson-core-asl-1.9.13.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/leveldbjni-all-1.8.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/hamcrest-core-1.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/snappy-java-1.0.4.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/netty-3.6.2.Final.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-core-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-guice-1.9.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/xz-1.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/aopalliance-1.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/paranamer-2.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/commons-compress-1.4.1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/asm-3.2.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/junit-4.11.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/guice-servlet-3.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/protobuf-java-2.5.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/javax.inject-1.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/avro-1.7.4.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/hadoop-annotations-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/guice-3.0.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/lib/log4j-1.2.17.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-core-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-shuffle-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.3-tests.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-plugins-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-common-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-app-2.7.3.jar:/home/spark/app/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar:/contrib/capacity-scheduler/*.jar:/contrib/capacity-scheduler/*.jar:/contrib/capacity-scheduler/*.jar
STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r baa91f7c6bc9cb92be5982de4719c1c8af91ccff; compiled by 'root' on 2016-08-18T01:41Z
STARTUP_MSG: java = 1.8.0_60
************************************************************/
2017-06-07 00:33:19,697 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: registered UNIX signal handlers for [TERM, HUP, INT]
2017-06-07 00:33:24,628 INFO org.apache.hadoop.metrics2.impl.MetricsConfig: loaded properties from hadoop-metrics2.properties
2017-06-07 00:33:25,153 INFO org.apache.hadoop.metrics2.impl.MetricsSystemImpl: Scheduled snapshot period at 10 second(s).
2017-06-07 00:33:25,153 INFO org.apache.hadoop.metrics2.impl.MetricsSystemImpl: DataNode metrics system started
2017-06-07 00:33:25,174 INFO org.apache.hadoop.hdfs.server.datanode.BlockScanner: Initialized block scanner with targetBytesPerSec 1048576
2017-06-07 00:33:25,198 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Configured hostname is slave1
2017-06-07 00:33:25,251 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Starting DataNode with maxLockedMemory = 0
2017-06-07 00:33:25,452 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Opened streaming server at /0.0.0.0:50010
2017-06-07 00:33:25,463 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Balancing bandwith is 1048576 bytes/s
2017-06-07 00:33:25,463 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Number threads for balancing is 5
2017-06-07 00:33:26,287 INFO org.mortbay.log: Logging to org.slf4j.impl.Log4jLoggerAdapter(org.mortbay.log) via org.mortbay.log.Slf4jLog
2017-06-07 00:33:26,321 INFO org.apache.hadoop.security.authentication.server.AuthenticationFilter: Unable to initialize FileSignerSecretProvider, falling back to use random secrets.
2017-06-07 00:33:26,379 INFO org.apache.hadoop.http.HttpRequestLog: Http request log for http.requests.datanode is not defined
2017-06-07 00:33:26,390 INFO org.apache.hadoop.http.HttpServer2: Added global filter 'safety' (class=org.apache.hadoop.http.HttpServer2$QuotingInputFilter)
2017-06-07 00:33:26,395 INFO org.apache.hadoop.http.HttpServer2: Added filter static_user_filter (class=org.apache.hadoop.http.lib.StaticUserWebFilter$StaticUserFilter) to context datanode
2017-06-07 00:33:26,396 INFO org.apache.hadoop.http.HttpServer2: Added filter static_user_filter (class=org.apache.hadoop.http.lib.StaticUserWebFilter$StaticUserFilter) to context static
2017-06-07 00:33:26,397 INFO org.apache.hadoop.http.HttpServer2: Added filter static_user_filter (class=org.apache.hadoop.http.lib.StaticUserWebFilter$StaticUserFilter) to context logs
2017-06-07 00:33:26,451 INFO org.apache.hadoop.http.HttpServer2: Jetty bound to port 40839
2017-06-07 00:33:26,451 INFO org.mortbay.log: jetty-6.1.26
2017-06-07 00:33:27,485 INFO org.mortbay.log: Started HttpServer2$SelectChannelConnectorWithSafeStartup@localhost:40839
2017-06-07 00:33:28,112 INFO org.apache.hadoop.hdfs.server.datanode.web.DatanodeHttpServer: Listening HTTP traffic on /0.0.0.0:50075
2017-06-07 00:33:29,102 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: dnUserName = spark
2017-06-07 00:33:29,102 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: supergroup = supergroup
2017-06-07 00:33:29,730 INFO org.apache.hadoop.ipc.CallQueueManager: Using callQueue class java.util.concurrent.LinkedBlockingQueue
2017-06-07 00:33:29,838 INFO org.apache.hadoop.ipc.Server: Starting Socket Reader #1 for port 50020
2017-06-07 00:33:30,208 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Opened IPC server at /0.0.0.0:50020
2017-06-07 00:33:30,274 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Refresh request received for nameservices: null
2017-06-07 00:33:30,432 INFO org.mortbay.log: Stopped HttpServer2$SelectChannelConnectorWithSafeStartup@localhost:0
2017-06-07 00:33:30,434 INFO org.apache.hadoop.ipc.Server: Stopping server on 50020
2017-06-07 00:33:30,435 INFO org.apache.hadoop.metrics2.impl.MetricsSystemImpl: Stopping DataNode metrics system...
2017-06-07 00:33:30,436 INFO org.apache.hadoop.metrics2.impl.MetricsSystemImpl: DataNode metrics system stopped.
2017-06-07 00:33:30,436 INFO org.apache.hadoop.metrics2.impl.MetricsSystemImpl: DataNode metrics system shutdown complete.
2017-06-07 00:33:30,446 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Shutdown complete.
2017-06-07 00:33:30,448 FATAL org.apache.hadoop.hdfs.server.datanode.DataNode: Exception in secureMain
java.io.IOException: Incorrect configuration: namenode address dfs.namenode.servicerpc-address or dfs.namenode.rpc-address is not configured.
    at org.apache.hadoop.hdfs.DFSUtil.getNNServiceRpcAddressesForCluster(DFSUtil.java:875)
    at org.apache.hadoop.hdfs.server.datanode.BlockPoolManager.refreshNamenodes(BlockPoolManager.java:155)
    at org.apache.hadoop.hdfs.server.datanode.DataNode.startDataNode(DataNode.java:1129)
    at org.apache.hadoop.hdfs.server.datanode.DataNode.<init>(DataNode.java:429)
    at org.apache.hadoop.hdfs.server.datanode.DataNode.makeInstance(DataNode.java:2374)
    at org.apache.hadoop.hdfs.server.datanode.DataNode.instantiateDataNode(DataNode.java:2261)
    at org.apache.hadoop.hdfs.server.datanode.DataNode.createDataNode(DataNode.java:2308)
    at org.apache.hadoop.hdfs.server.datanode.DataNode.secureMain(DataNode.java:2485)
    at org.apache.hadoop.hdfs.server.datanode.DataNode.main(DataNode.java:2509)
2017-06-07 00:33:30,488 INFO org.apache.hadoop.util.ExitUtil: Exiting with status 1
2017-06-07 00:33:30,499 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: SHUTDOWN_MSG:
/************************************************************
SHUTDOWN_MSG: Shutting down DataNode at slave1/192.168.80.146
************************************************************/
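The only entry in this log that matters for the diagnosis is the FATAL one: the DataNode exits because neither `dfs.namenode.servicerpc-address` nor `dfs.namenode.rpc-address` is configured. Here is a minimal Python sketch for pulling such entries out of a long log. It assumes the log file has one entry per line in the `timestamp LEVEL class: message` layout seen above. `find_failures` is a hypothetical helper name.

```python
import re

# Matches "2017-06-07 00:33:30,448 FATAL some.Class: message".
# Only FATAL and ERROR entries are of interest here.
LOG_LINE = re.compile(
    r"^(\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2},\d{3}) (FATAL|ERROR) (\S+): (.*)$"
)


def find_failures(log_text: str) -> list:
    """Return (timestamp, level, source, message) tuples for FATAL/ERROR entries."""
    failures = []
    for line in log_text.splitlines():
        match = LOG_LINE.match(line)
        if match:
            failures.append(match.groups())
    return failures


if __name__ == "__main__":
    sample = (
        "2017-06-07 00:33:30,446 INFO org.apache.hadoop.hdfs.server.datanode.DataNode: Shutdown complete.\n"
        "2017-06-07 00:33:30,448 FATAL org.apache.hadoop.hdfs.server.datanode.DataNode: Exception in secureMain\n"
        "java.io.IOException: Incorrect configuration: namenode address "
        "dfs.namenode.servicerpc-address or dfs.namenode.rpc-address is not configured.\n"
    )
    for failure in find_failures(sample):
        print(failure)
```

The exception text sits on the line after the FATAL entry, so in practice it helps to print a few lines following each match as well.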

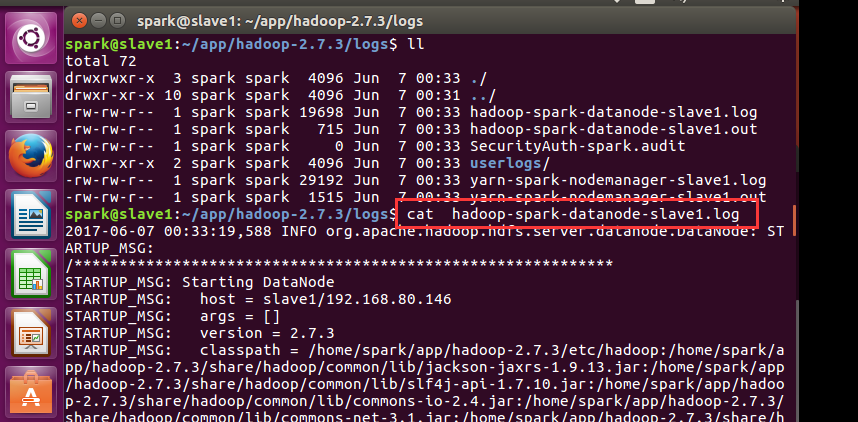

<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://master:9000</value>
  </property>
  <property>
    <name>io.file.buffer.size</name>
    <value>131072</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/usr/local/hadoop/hadoop-2.6.0/tmp</value>
  </property>
  <property>
    <name>hadoop.proxyuser.hadoop.hosts</name>
    <value>*</value>
  </property>
  <property>
    <name>hadoop.proxyuser.hadoop.groups</name>
    <value>*</value>
  </property>
</configuration>
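The fix appears to be making sure this `core-site.xml`, with `fs.defaultFS` pointing at the NameNode, is present on the slave nodes. As far as I understand Hadoop 2.x, when `dfs.namenode.rpc-address` is not set explicitly the DataNode derives the NameNode address from `fs.defaultFS`, so a missing or empty value would produce exactly the error in the log. Here is a minimal Python sketch of a pre-start check. `read_properties` and `namenode_address_problem` are hypothetical helpers, and the check only covers the simple non-HA case.

```python
import xml.etree.ElementTree as ET


def read_properties(config_xml: str) -> dict:
    """Parse Hadoop-style <configuration> XML into a {name: value} dict."""
    root = ET.fromstring(config_xml)
    props = {}
    for prop in root.findall("property"):
        name = prop.findtext("name")
        if name is not None:
            props[name.strip()] = (prop.findtext("value") or "").strip()
    return props


def namenode_address_problem(config_xml: str):
    """Return a description of the problem, or None if fs.defaultFS looks usable."""
    default_fs = read_properties(config_xml).get("fs.defaultFS", "")
    if not default_fs:
        return "fs.defaultFS is not set"
    if not default_fs.startswith("hdfs://"):
        return "fs.defaultFS is not an hdfs:// URI: " + default_fs
    return None


if __name__ == "__main__":
    good = """<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://master:9000</value>
  </property>
</configuration>"""
    print(namenode_address_problem(good))
    print(namenode_address_problem("<configuration></configuration>"))
```

Running this against the contents of `core-site.xml` on each slave before starting the daemons catches an empty or un-synced config file early, without having to read the DataNode log afterwards.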

Success!