yum -y install yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

yum -y install docker-ce

systemctl enable docker

systemctl start docker

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

EOF

yum -y install epel-release

yum clean all

yum makecache

yum -y install kubelet kubeadm kubectl kubernetes-cni

systemctl enable kubelet && systemctl start kubelet

docker pull mirrorgooglecontainers/kube-apiserver:v1.13.4

docker pull mirrorgooglecontainers/kube-controller-manager:v1.13.4

docker pull mirrorgooglecontainers/kube-scheduler:v1.13.4

docker pull mirrorgooglecontainers/kube-proxy:v1.13.4

docker pull mirrorgooglecontainers/pause:3.1

docker pull mirrorgooglecontainers/etcd:3.2.24

docker pull coredns/coredns:1.2.6

docker tag mirrorgooglecontainers/kube-apiserver:v1.13.4 k8s.gcr.io/kube-apiserver:v1.13.4

docker tag mirrorgooglecontainers/kube-controller-manager:v1.13.4 k8s.gcr.io/kube-controller-manager:v1.13.4

docker tag mirrorgooglecontainers/kube-scheduler:v1.13.4 k8s.gcr.io/kube-scheduler:v1.13.4

docker tag mirrorgooglecontainers/kube-proxy:v1.13.4 k8s.gcr.io/kube-proxy:v1.13.4

docker tag mirrorgooglecontainers/pause:3.1 k8s.gcr.io/pause:3.1

docker tag mirrorgooglecontainers/etcd:3.2.24 k8s.gcr.io/etcd:3.2.24

docker tag docker.io/coredns/coredns:1.2.6 k8s.gcr.io/coredns:1.2.6
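
The pull and tag commands above all follow one pattern: pull an image from a mirror, then retag it under k8s.gcr.io so that kubeadm finds it locally. A minimal sketch of the same steps as a loop follows. The helper names gcr_name and run and the DRY_RUN switch are illustrative and not part of the original steps; with DRY_RUN=1 (the default here) the script only prints the docker commands so they can be reviewed first.

```shell
# Sketch: pull each control-plane image from a mirror and retag it for kubeadm.
# DRY_RUN=1 (default) prints the docker commands; DRY_RUN=0 executes them.
DRY_RUN="${DRY_RUN:-1}"

# Map a mirror image name to the k8s.gcr.io name used in the tag commands.
gcr_name() {
  # Drop everything up to the last "/" and prepend the target registry.
  echo "k8s.gcr.io/${1##*/}"
}

# Print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Same images and versions as in the commands above.
IMAGES="
mirrorgooglecontainers/kube-apiserver:v1.13.4
mirrorgooglecontainers/kube-controller-manager:v1.13.4
mirrorgooglecontainers/kube-scheduler:v1.13.4
mirrorgooglecontainers/kube-proxy:v1.13.4
mirrorgooglecontainers/pause:3.1
mirrorgooglecontainers/etcd:3.2.24
coredns/coredns:1.2.6
"

for image in $IMAGES; do
  run docker pull "$image"
  run docker tag "$image" "$(gcr_name "$image")"
done
```

Setting DRY_RUN=0 before running the script performs the actual pulls and tags.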

• Disable swap temporarily

sudo swapoff -a

• Disable swap permanently

Open /etc/fstab and comment out the swap line.
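
The fstab edit can also be scripted. The following is a minimal sketch, assuming the swap entry contains swap as a whitespace-separated field, as in a stock CentOS fstab. The function name comment_out_swap is illustrative. A copy of the original file is kept with a .bak suffix.

```shell
# Comment out every active swap entry in an fstab-style file.
# Usage: comment_out_swap /etc/fstab   (run as root for the real file)
comment_out_swap() {
  fstab="$1"
  # Select lines that are not already comments and contain "swap" as a
  # whitespace-delimited field, then prefix them with "#".
  sed -i.bak '/^[^#].*[[:space:]]swap[[:space:]]/s/^/#/' "$fstab"
}
```

After running comment_out_swap /etc/fstab, it is worth checking the result with grep swap /etc/fstab before rebooting.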

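• Disable SELinux temporarily

setenforce 0

• Disable SELinux permanently

Edit /etc/sysconfig/selinux so that SELINUX is set to disabled. The pattern below matches whatever the current value is (a stock CentOS install uses enforcing, not permissive), and --follow-symlinks keeps sed from replacing the symlink to /etc/selinux/config with a regular file:

sed -i --follow-symlinks 's/^SELINUX=.*/SELINUX=disabled/' /etc/sysconfig/selinux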

cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.conf.all.forwarding = 1

vm.swappiness = 0

EOF

sysctl --system

sysctl -p /etc/sysctl.d/k8s.conf

kubeadm init

• Temporary (current shell session only)

export KUBECONFIG=/etc/kubernetes/admin.conf

• Permanent

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

or

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

source ~/.bash_profile # this step is essential; otherwise kubectl reports that it cannot connect

kubectl apply -f https://git.io/weave-kube-1.6

Reference: https://juejin.im/post/5c36fd906fb9a049f8197c9b?tdsourcetag=s_pctim_aiomsg

curl -O https://storage.googleapis.com/kubernetes-helm/helm-v2.13.1-linux-amd64.tar.gz

tar -zxvf helm-v2.13.1-linux-amd64.tar.gz

mv linux-amd64/helm /usr/local/bin

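Run helm help to verify that the installation succeeded. If the shell cannot find helm, add its directory to the path with export PATH=/usr/local/bin:$PATH; this only lasts for the current session.

Before running the steps below, switch to the root user with sudo -i; otherwise the terminal reports “The connection to the server localhost:8080 was refused”. The root shell needs the same export PATH=/usr/local/bin:$PATH, or it reports that the helm command is not found.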

kubectl --namespace kube-system create serviceaccount tiller

kubectl create clusterrolebinding tiller --clusterrole cluster-admin --serviceaccount=kube-system:tiller

helm init --service-account tiller

Use kubectl patch to configure tiller as follows:

kubectl patch deployment tiller-deploy --namespace=kube-system --type=json --patch='[{"op": "add", "path": "/spec/template/spec/containers/0/command", "value": ["/tiller", "--listen=localhost:44134"]}]'

helm version

If the terminal shows version information for both the client and the server, and the two match, the installation succeeded.

Note:

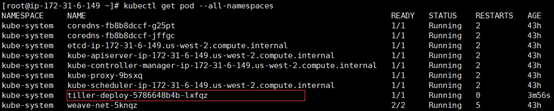

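During the verification in step 5, the terminal may report “could not find a ready tiller pod”. This means the tiller pod exists but is not running. Use kubectl get pod --all-namespaces to find the pod that belongs to tiller.

Then run kubectl describe pod <pod-name> --namespace kube-system (the pod name is the one in the red box in the figure above) to see the error description. It shows “1 node(s) had taints that the pod didn't tolerate”: for security reasons, Kubernetes by default does not schedule pods on the master node. The following command removes that restriction:

kubectl taint nodes --all node-role.kubernetes.io/master-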

References:

https://zero-to-jupyterhub.readthedocs.io/en/latest/setup-helm.html

https://github.com/helm/helm/blob/master/docs/install.md

https://www.hi-linux.com/posts/21466.html

https://my.oschina.net/eima/blog/1860598

openssl rand -hex 32

touch config.yaml

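Open config.yaml with vi and add the following:

proxy:
  secretToken: "<RANDOM_HEX>"

Here <RANDOM_HEX> is the random string generated in the first step.

Note: there must be a space after the colon in secretToken: "<RANDOM_HEX>"; without it, the later steps fail.

Save and exit with :wq, then add the chart repository:

helm repo add jupyterhub https://jupyterhub.github.io/helm-chart/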

helm repo update
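
As an alternative to creating config.yaml by hand, the token generation and the file contents described above can be combined in one step. This is a sketch only: the function name write_jhub_config is illustrative, and the target file is overwritten if it already exists.

```shell
# Write a minimal JupyterHub config file containing a fresh proxy secret token.
# Usage: write_jhub_config config.yaml
write_jhub_config() {
  config_file="$1"
  # 32 random bytes, hex-encoded (64 characters), as in the openssl step.
  token="$(openssl rand -hex 32)"
  # Note the space after each colon and the two-space indent under "proxy:".
  cat > "$config_file" <<EOF
proxy:
  secretToken: "$token"
EOF
}
```

Calling write_jhub_config config.yaml produces the same two-line file as the manual steps, with the placeholder already filled in.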

RELEASE=jhub

NAMESPACE=jhub

helm upgrade --install $RELEASE jupyterhub/jupyterhub \
  --namespace $NAMESPACE \
  --version=0.8.0 \
  --values config.yaml

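Run kubectl get service --namespace jhub to find the port assigned to proxy-public, then open <host-ip>:<port> in a browser to access and use JupyterHub.

Note:

If proxy-public stays in the pending state, it is because the service is exposed through a LoadBalancer and no load balancer is configured to forward traffic, so it never gets an external IP. Change the service type to NodePort so that it is reachable at the host IP plus a port. Use the following commands:

kubectl -n jhub get svc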

kubectl -n jhub edit svc proxy-public

Find the type field and change LoadBalancer to NodePort.
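
The same change can be made without opening an editor. The sketch below uses kubectl patch to set the service type and then prints the node ports that were assigned. The function name expose_via_nodeport is illustrative, and it assumes kubectl is already configured for the cluster.

```shell
# Switch a Service to type NodePort, then print the node ports it was given.
# Usage: expose_via_nodeport <namespace> <service>
expose_via_nodeport() {
  namespace="$1"
  service="$2"
  # Patch only the spec.type field; the rest of the Service is left unchanged.
  kubectl -n "$namespace" patch svc "$service" -p '{"spec": {"type": "NodePort"}}'
  # Print every nodePort of the Service, separated by spaces.
  kubectl -n "$namespace" get svc "$service" -o jsonpath='{.spec.ports[*].nodePort}'
}
```

For the release installed here, the call would be expose_via_nodeport jhub proxy-public.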

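If kubectl describe pod <pod-name> --namespace jhub reports “pod has unbound immediate PersistentVolumeClaims”, edit the config.yaml used to install JupyterHub with helm and add the following. Both settings turn off persistent storage, so hub state and user files do not survive a pod restart.

hub:
  db:
    type: sqlite-memory

singleuser:
  storage:
    type: none

Then run the following commands again: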

RELEASE=jhub

NAMESPACE=jhub

helm upgrade --install $RELEASE jupyterhub/jupyterhub \
  --namespace $NAMESPACE \
  --version=0.8.0 \
  --values config.yaml
