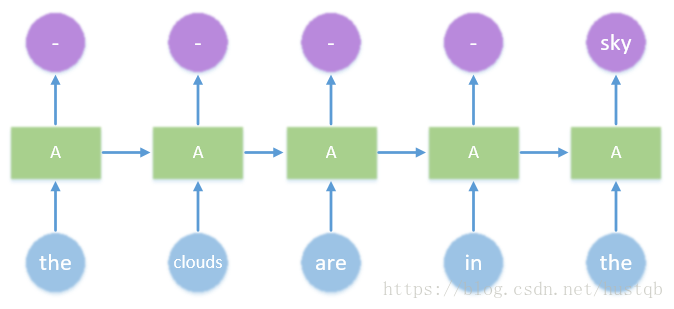

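Key property: an RNN retains part of the information from past inputs, and that information influences the current prediction.

In the figure above, the input sequence is "the clouds are in the", and the RNN predicts "sky" as the next word.

Every module except the first is affected not only by its current input but also by what came before it. In this way, information from the past is passed along step by step to the end.

The final prediction "sky" is therefore an inference over the whole phrase "the clouds are in the".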

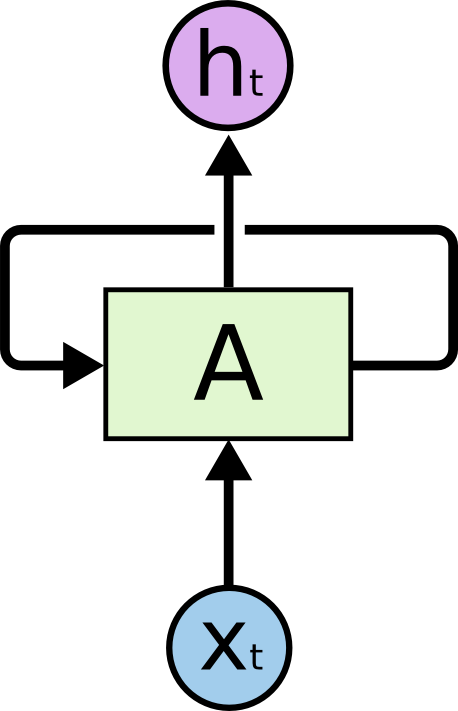

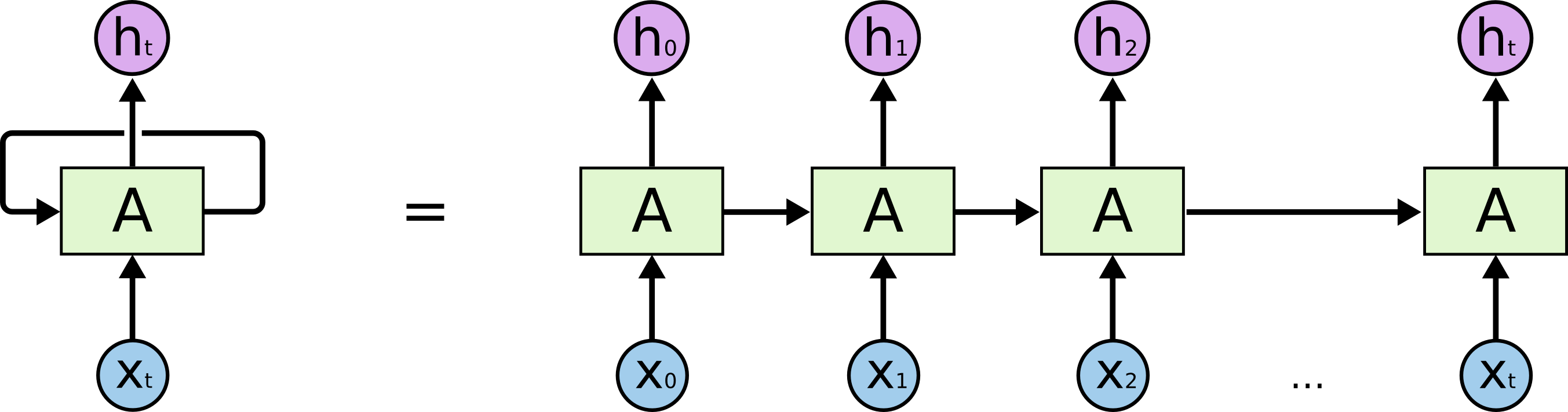

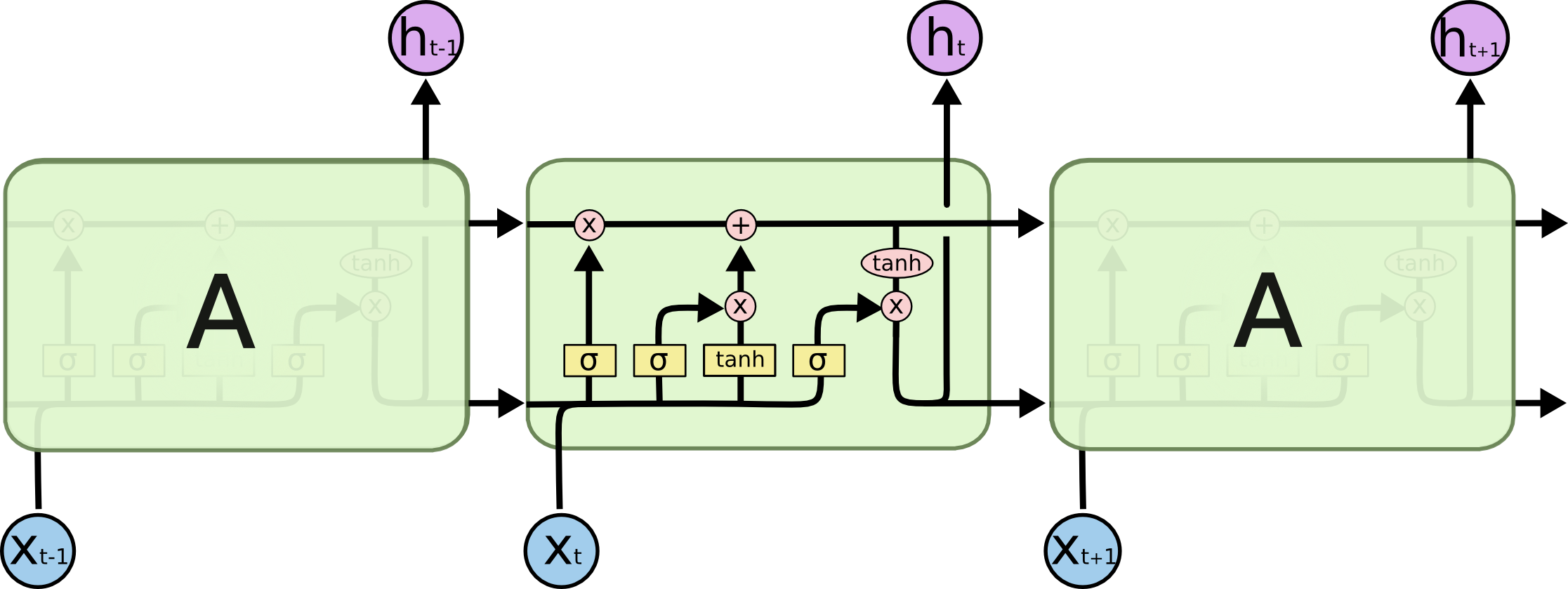

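- x_t: the input at time t

- A: the hidden layer, which can be thought of as a fully connected layer with a loop

- h_t: the output at time t

fig2 is fig1 unrolled in time. Every A in fig2 has the same structure and shares the same parameters.

In fig2, the inputs for time steps 0 through t are laid out in a single unrolled graph. Each step still consumes the hidden state of the previous step, and the unrolled form is what makes backpropagation through time straightforward.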
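As a sketch of what each A computes, assuming the tanh cell that this post describes for BasicRNNCell further down (the symbols $W_x$, $W_h$, and $b$ are notation introduced here, not taken from the figures):

$$
h_t = \tanh(W_x x_t + W_h h_{t-1} + b)
$$

The same $W_x$, $W_h$, and $b$ are reused at every time step, which is why all copies of A in the unrolled view are identical.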

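Problem: a plain RNN cannot retain information from many time steps back.

Given the input "the clouds are in the", an RNN can predict "sky". For a long passage such as "I grew up in France… I speak fluent French", however, predicting the final word "French" is too hard for an RNN, because the information it retains fades as the time steps go by.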
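One way to see this fading is to measure how much the final hidden state still depends on the initial one. The sketch below assumes a toy scalar RNN, h_t = tanh(w * h_{t-1} + u * x_t); the function name and the values of `w` and `u` are arbitrary choices for illustration, not taken from the original post.

```python
import math

def gradient_through_time(w, u, inputs, h0=0.0):
    """Return d h_T / d h_0 for the scalar RNN h_t = tanh(w*h_{t-1} + u*x_t)."""
    h = h0
    grad = 1.0
    for x in inputs:
        h = math.tanh(w * h + u * x)
        # Chain rule for one step: d h_t / d h_{t-1} = w * (1 - h_t^2)
        grad *= w * (1.0 - h * h)
    return grad

# Same toy RNN, short sequence versus long sequence.
grad_short = gradient_through_time(w=0.9, u=0.5, inputs=[1.0] * 5)
grad_long = gradient_through_time(w=0.9, u=0.5, inputs=[1.0] * 50)
print(grad_short, grad_long)
```

Each step multiplies the gradient by a factor smaller than 1 in this setup, so the influence of early inputs shrinks roughly geometrically with sequence length.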

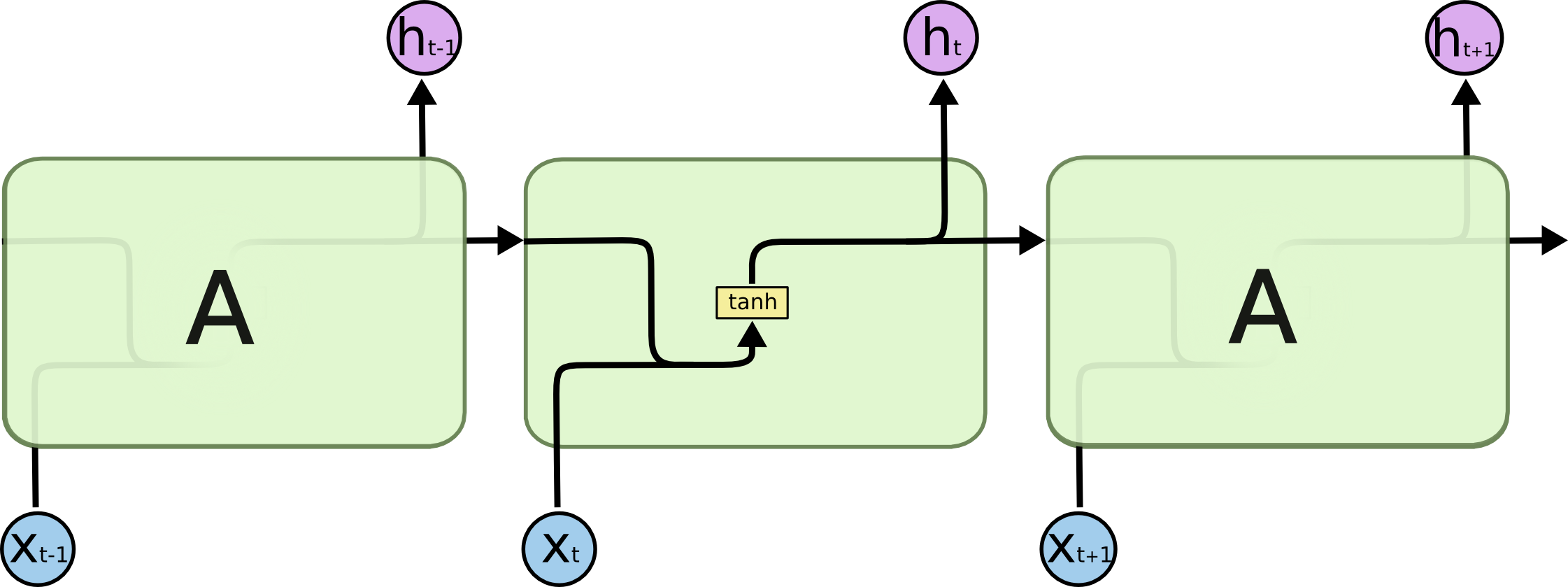

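An LSTM can retain long-term information.

Full name: Long Short Term Memory network. It is an improved variant of the RNN.

Key property: it contains several gating units that retain past information efficiently.

Recommended reading: the blog post Understanding LSTM Networks, or one of its Chinese translations.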

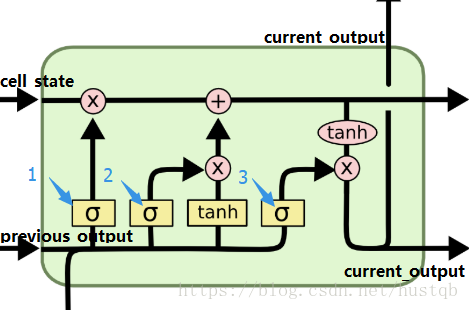

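Understanding the three gating units shown above is enough to understand the whole cell. The labels 1, 2, and 3 mark the forget gate, the input gate, and the output gate, respectively.

Question: why is the sigmoid function used for the gates?

Reason 1: its range is (0, 1), so a gate's output can be read as the fraction of information to let through. Reason 2: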

The work of a cell consists of:

- filtering the cell_state

- adding to the cell_state

- producing output from the cell_state

All of these steps are controlled by current_input and previous_output.
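The three steps can be sketched in plain Python. This is a minimal sketch that assumes the standard LSTM formulation (the one described in Understanding LSTM Networks), handles a single example rather than a batch, and uses hypothetical names (`lstm_step`, `affine`, the `params` layout) that are not from any library.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def affine(weights, bias, vec):
    """Compute weights @ vec + bias for plain Python lists."""
    return [sum(w * v for w, v in zip(row, vec)) + b
            for row, b in zip(weights, bias)]

def lstm_step(x, h_prev, c_prev, params):
    """One LSTM time step; `params` maps a gate name to (weights, bias)."""
    z = x + h_prev  # list concatenation of current input and previous output
    f = [sigmoid(a) for a in affine(*params["forget"], z)]        # forget gate
    i = [sigmoid(a) for a in affine(*params["input"], z)]         # input gate
    g = [math.tanh(a) for a in affine(*params["candidate"], z)]   # candidate values
    o = [sigmoid(a) for a in affine(*params["output"], z)]        # output gate
    # Filter the old cell state, then add the gated candidate values.
    c = [f_k * c_k + i_k * g_k for f_k, c_k, i_k, g_k in zip(f, c_prev, i, g)]
    # Output a gated, squashed view of the new cell state.
    h = [o_k * math.tanh(c_k) for o_k, c_k in zip(o, c)]
    return h, c

def init_gate(rng, rows, cols):
    """Small random weights and zero bias (an arbitrary choice for the demo)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]
    return weights, [0.0] * rows

rng = random.Random(0)
input_size, num_units = 2, 3
params = {name: init_gate(rng, num_units, input_size + num_units)
          for name in ("forget", "input", "candidate", "output")}

h, c = [0.0] * num_units, [0.0] * num_units
for x in ([1.0, 0.0], [0.0, 1.0], [1.0, 1.0]):
    h, c = lstm_step(x, h, c, params)
print(h, c)
```

Every gate is computed from the concatenation of the current input and the previous output, which matches the statement that those two values control all of the cell's work.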

TensorFlow (1.x) provides several cell classes: BasicRNNCell, BasicLSTMCell, GRUCell, LSTMCell, and LayerNormBasicLSTMCell. All of them inherit from the abstract class tf.contrib.rnn.RNNCell.

Pseudocode for a simple TensorFlow RNN:

    def run_rnn(cell, inputs, initial_state):
        # cell: any RNNCell, e.g. LSTM(num_units)
        # inputs: a list with one entry per time step
        state = initial_state  # initial state for the RNNCell
        result = []            # container for the outputs

        # for every time step, get the output and the updated state
        for x in inputs:
            output, state = cell(x, state)
            result.append(output)

        return result

The most important part of an RNNCell is the call that maps the current input and state to an output and a new state:

    output, state = cell(input, state)

BasicRNNCell has a simple structure. Its core code is:

    output = self._activation(self._linear([inputs, state]))
    return output, output

self._activation is the activation function, usually tanh. self._linear computes w⋅x+b.

Because the method returns output, output, the cell_state and the current_output are the same.
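To make the BasicRNNCell computation concrete, here is a minimal sketch in plain Python. `TinyRNNCell` is a hypothetical stand-in written for this illustration, not the TensorFlow class; it processes one example at a time instead of a batch, and its random weight initialization is an arbitrary choice.

```python
import math
import random

class TinyRNNCell:
    """Hypothetical stand-in that mimics the BasicRNNCell computation."""

    def __init__(self, num_units, input_size, seed=0):
        rng = random.Random(seed)
        self.num_units = num_units
        # One weight matrix applied to the concatenation [inputs, state].
        self.weights = [[rng.uniform(-0.5, 0.5)
                         for _ in range(input_size + num_units)]
                        for _ in range(num_units)]
        self.bias = [0.0] * num_units

    def zero_state(self):
        return [0.0] * self.num_units

    def __call__(self, inputs, state):
        concat = inputs + state  # list concatenation
        output = [math.tanh(sum(w * v for w, v in zip(row, concat)) + b)
                  for row, b in zip(self.weights, self.bias)]
        # As in BasicRNNCell, the new state is the output itself.
        return output, output

cell = TinyRNNCell(num_units=4, input_size=2)
state = cell.zero_state()
outputs = []
for x in ([1.0, 0.0], [0.0, 1.0], [0.5, 0.5]):
    output, state = cell(x, state)
    outputs.append(output)
print(outputs[-1])
```

After the loop, the last entry of `outputs` and `state` hold the same values, which is the behavior the `return output, output` line implies.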

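The BasicRNNCell constructor and call signatures:

    __init__(
        num_units,  # number of units in the hidden layer
        activation=None,
        reuse=None,
        name=None
    )

    __call__(
        inputs,  # current input, [batch_size, input_size]
        state,   # current state, [batch_size, self.state_size] or
                 # ([batch_size, self.state_size[0]], [batch_size, self.state_size[1]])
        scope=None,
        *args,
        **kwargs
    )

Above, we implemented an RNN with an RNNCell and a for loop. tf.nn.static_rnn does the whole thing in a single call. In pseudocode: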

    results, state = tf.nn.static_rnn(LSTM(num_units),
                                      inputs,
                                      initial_state=initial_state)

| Parameter | Description |
|---|---|
| cell | an RNNCell instance |
| inputs | a list in which each element has shape=[batch_size, input_size] |
| initial_state | optional, the initial state |
| dtype | optional, data type |
| sequence_length | optional, shape=[batch_size] |
| scope | variable scope |

Returns

A tuple (outputs, state), where outputs is a list containing the output of every time step and state is the cell_state at the last time step.
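Because static_rnn expects `inputs` as a list with one [batch_size, input_size] entry per time step, batch-major data has to be split along the time axis first. The sketch below shows that reshaping with nested Python lists. `unstack_time` is a hypothetical helper; in TensorFlow 1.x the usual way to do this is `tf.unstack(x, axis=1)`.

```python
def unstack_time(batch):
    """Turn [batch_size][max_time][input_size] into a list of max_time
    items, each shaped [batch_size][input_size]."""
    return [list(step) for step in zip(*batch)]

# 2 sequences, 3 time steps, 1 feature per step
batch = [
    [[1.0], [2.0], [3.0]],
    [[4.0], [5.0], [6.0]],
]
inputs = unstack_time(batch)
print(len(inputs), inputs[0])
```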

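tf.nn.dynamic_rnn is essentially an improved version of static_rnn. The two differ in their input and output formats and in efficiency. dynamic_rnn is more efficient because it adapts the number of unrolled RNNCell copies to the sequence lengths in each batch. For details, see the blog post on the difference between static_rnn and dynamic_rnn.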

    import tensorflow as tf  # TensorFlow 1.x

    # create a BasicRNNCell
    rnn_cell = tf.nn.rnn_cell.BasicRNNCell(hidden_size)

    # define the initial state
    initial_state = rnn_cell.zero_state(batch_size, dtype=tf.float32)

    # 'outputs' is a tensor of shape [batch_size, max_time, cell_state_size]
    # 'state' is a tensor of shape [batch_size, cell_state_size]
    outputs, state = tf.nn.dynamic_rnn(rnn_cell, input_data,
                                       initial_state=initial_state,
                                       dtype=tf.float32)

Stacking several cells with MultiRNNCell:

    # create 2 LSTMCells
    rnn_layers = [tf.nn.rnn_cell.LSTMCell(size) for size in [128, 256]]

    # create an RNN cell composed sequentially of a number of RNNCells
    multi_rnn_cell = tf.nn.rnn_cell.MultiRNNCell(rnn_layers)

    # 'outputs' is a tensor of shape [batch_size, max_time, 256]
    # 'state' is an N-tuple, where N is the number of LSTMCells, containing a
    # tf.contrib.rnn.LSTMStateTuple for each cell
    outputs, state = tf.nn.dynamic_rnn(cell=multi_rnn_cell,
                                       inputs=data,
                                       dtype=tf.float32)

| Parameter | Description |
|---|---|
| cell | an RNNCell instance |
| inputs | time_major == False (default) => [batch_size, max_time, …]; time_major == True => [max_time, batch_size, …] |
| sequence_length | optional, a vector of shape [batch_size] |
| initial_state | optional, the initial state |
| dtype | optional, data type |
| parallel_iterations | optional, number of iterations to run in parallel, default 32 |
| swap_memory | optional, whether to swap tensors from GPU to CPU memory so that long sequences fit during training |
| time_major | optional, time_major == True is slightly more efficient |
| scope | optional, VariableScope |
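The effect of the sequence_length parameter in the table above can be sketched in plain Python. This is an illustration of the documented behavior (past a sequence's length, outputs are zeroed and the state is copied through), not TensorFlow's implementation. `run_with_lengths` and `running_sum_cell` are hypothetical names, and the loop runs one sequence at a time for clarity rather than stepping over the whole batch.

```python
def run_with_lengths(cell, batch, lengths, initial_state):
    """batch: one list of per-step inputs per sequence, padded to equal length."""
    all_outputs, final_states = [], []
    for sequence, length in zip(batch, lengths):
        state = initial_state
        outputs = []
        for t, x in enumerate(sequence):
            if t < length:
                output, state = cell(x, state)
            else:
                output = 0.0  # past the end: zero output, state copied through
            outputs.append(output)
        all_outputs.append(outputs)
        final_states.append(state)
    return all_outputs, final_states

def running_sum_cell(x, state):
    """Toy cell (an assumption for this demo): the state accumulates the inputs."""
    new_state = state + x
    return new_state, new_state

batch = [[1.0, 2.0, 3.0], [4.0, 0.0, 0.0]]  # the second sequence is padded
outputs, states = run_with_lengths(running_sum_cell, batch,
                                   lengths=[3, 1], initial_state=0.0)
print(outputs, states)
```

The final state of the shorter sequence is the state at its last valid step, so padding does not contaminate it.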

Returns

A pair (outputs, state).

- outputs: time_major == False => [batch_size, max_time, cell.output_size]; time_major == True => [max_time, batch_size, cell.output_size]

- state: if cell.state_size is an integer => [batch_size, cell.state_size]; the other cases are omitted here.

For a worked example, see "Anna Karenina" text generation: building an LSTM model with TensorFlow.

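How does an RNN learn a sequence? This article explains it with a simple sequence-generation example. For more on how RNNs work and how they learn, see The Unreasonable Effectiveness of Recurrent Neural Networks.

We train an RNN on War and Peace and then have it generate similar text.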
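A character-level setup of this kind usually turns the text into next-character prediction pairs. The sketch below shows one common way to prepare such data; it is an assumption about the preprocessing, not code from the original experiment, and `build_vocab` and `make_training_pairs` are hypothetical helpers.

```python
def build_vocab(text):
    """Map every distinct character to an integer id."""
    chars = sorted(set(text))
    char_to_id = {ch: i for i, ch in enumerate(chars)}
    return chars, char_to_id

def make_training_pairs(text, char_to_id, seq_len):
    """Cut the text into (inputs, targets) pairs of length seq_len."""
    ids = [char_to_id[ch] for ch in text]
    pairs = []
    for start in range(0, len(ids) - seq_len, seq_len):
        inputs = ids[start:start + seq_len]
        targets = ids[start + 1:start + seq_len + 1]  # shifted by one character
        pairs.append((inputs, targets))
    return pairs

text = "hello world"
chars, char_to_id = build_vocab(text)
pairs = make_training_pairs(text, char_to_id, seq_len=5)

for inputs, targets in pairs:
    print("".join(chars[i] for i in inputs), "->",
          "".join(chars[i] for i in targets))
```

At every position, the target is the character that follows the input character, so the network is trained to predict the next character given everything it has seen so far.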

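At iteration 100:

    tyntd-iafhatawiaoihrdemot lytdws e ,tfti, astai f ogoh eoase rrranbyne 'nhthnee e
    plia tklrgd t o idoe ns,smtt h ne etie h,hregtrs nigtike,aoaenns lng

The RNN has learned to separate words with spaces, although it sometimes puts two spaces between words, and it has not yet learned words or punctuation well.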

At iteration 300:

    "Tmont thithey" fomesscerliund
    Keushey. Thom here
    sheulke, anmerenith ol sivh I lalterthend Bleipile shuwy fil on aseterlome
    coaniogennc Phe lism thond hon at. MeiDimorotion in ther thize."

Spacing is now handled well, punctuation is passable, and the model seems to have picked up some notion of sentence breaks.

At iteration 500:

    we counter. He stutn co des. His stanted out one ofler that concossions and was
    to gearang reay Jotrets and with fre colt otf paitt thin wall. Which das stimn

The RNN can now generate simple words.

At iteration 700:

    Aftair fall unsuch that the hall for Prince Velzonski's that me of
    her hearly, and behs to so arwage fiving were to it beloge, pavu say falling misfort
    how, and Gogition is so overelical and ofter.

At iteration 1200:

    "Kite vouch!" he repeated by her
    door. "But I would be done and quarts, feeling, then, son is people...."

At iteration 2000:

    "Why do what that day," replied Natasha, and wishing to himself the fact the
    princess, Princess Mary was easier, fed in had oftened him.
    Pierre aking his soul came to the packs and drove up his father-in-law women.

Summary: the RNN first learns the spacing structure between words, then simple words, and then longer words and sentences.
