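This article trains a text classification model to carry out a sentiment analysis task. Along the way it covers basic torchtext usage, such as BucketIterator, and some basic torch.nn building blocks, such as Conv2d.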

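In the sentiment classification task, the data consists of text strings and two sentiment labels, "pos" and "neg". The arguments of a Field determine how the data is processed: a TEXT field defines how the movie reviews are handled, and a LABEL field handles the two sentiment classes.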

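The TEXT field is created with tokenize='spacy', which means English sentences are tokenized with the spaCy tokenizer. When no tokenizer is specified, the default is to split on whitespace.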
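To make the difference between the two tokenization modes concrete, here is a minimal sketch. The regex tokenizer is only a hypothetical stand-in for a rule-based tokenizer such as spaCy's; it is not spaCy itself, and real spaCy output can differ (for example on contractions).

```python
import re


def whitespace_tokenize(text):
    """Split on runs of whitespace, like the default tokenizer described above."""
    return text.split()


def simple_rule_tokenize(text):
    """Hypothetical stand-in for a rule-based tokenizer: punctuation becomes its own token."""
    return re.findall(r"\w+|[^\w\s]", text)


sentence = "This film is great, really!"
print(whitespace_tokenize(sentence))   # punctuation stays glued to the words
print(simple_rule_tokenize(sentence))  # punctuation is split off
```

With whitespace splitting, "great," and "great" end up as two different vocabulary entries, which is one reason a proper tokenizer tends to be preferred for reviews.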

Summary of steps:

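import random

import torch
# Note: Field and BucketIterator belong to the older torchtext API
# (moved to torchtext.legacy in 0.9 and removed in later releases).
from torchtext import data

SEED = 1234

# Fix the random seeds so that runs are reproducible.
torch.manual_seed(SEED)
torch.cuda.manual_seed(SEED)
torch.backends.cudnn.deterministic = True

# Initialize the two Fields, which define preprocessing such as the tokenization type and the vocab.
TEXT = data.Field(tokenize='spacy')
LABEL = data.LabelField(dtype=torch.float)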

# Download the IMDB dataset, which contains 50,000 labeled movie reviews.
from torchtext import datasets

train_data, test_data = datasets.IMDB.splits(TEXT, LABEL)

# [Check how many examples each split contains]
print(f'Number of training examples: {len(train_data)}')
print(f'Number of testing examples: {len(test_data)}')

# [Inspect one example]
print(vars(train_data.examples[0]))

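# split() divides a dataset in two; the default ratio is 7:3 and can be changed with the split_ratio parameter.
# random.seed() returns None, so seed the generator first and pass its state explicitly.
random.seed(SEED)
train_data, valid_data = train_data.split(random_state=random.getstate())

# [Check how many examples each part contains]
print(f'Number of training examples: {len(train_data)}')
print(f'Number of validation examples: {len(valid_data)}')
print(f'Number of testing examples: {len(test_data)}')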

# Build the vocabulary (mapping each word to an integer index) and load the pretrained GloVe vectors.
# Alternative without pretrained vectors:
# TEXT.build_vocab(train_data, max_size=25000)
# LABEL.build_vocab(train_data)
TEXT.build_vocab(train_data, max_size=25000, vectors="glove.6B.100d", unk_init=torch.Tensor.normal_)
LABEL.build_vocab(train_data)

# [Inspect the vocabulary: token counts, most common words, itos (int to string), stoi (string to int), LABEL info]
print(f"Unique tokens in TEXT vocabulary: {len(TEXT.vocab)}")
print(f"Unique tokens in LABEL vocabulary: {len(LABEL.vocab)}")
print(TEXT.vocab.freqs.most_common(20))
print(TEXT.vocab.itos[:10])
print(LABEL.vocab.stoi)
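What a size-limited vocabulary does can be sketched in plain Python. This is an illustration under assumptions, not torchtext's implementation: the helper name `build_vocab`, the tie-breaking rule, and the toy documents are all hypothetical.

```python
from collections import Counter


def build_vocab(tokenized_docs, max_size, specials=("<unk>", "<pad>")):
    """Keep the max_size most frequent tokens and put the special tokens first."""
    counts = Counter(tok for doc in tokenized_docs for tok in doc)
    # Sort by descending frequency; break ties alphabetically so the result is deterministic.
    most_common = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))[:max_size]
    itos = list(specials) + [tok for tok, _ in most_common]
    stoi = {tok: idx for idx, tok in enumerate(itos)}
    return itos, stoi


docs = [["good", "film"], ["bad", "film"], ["good", "good", "plot"]]
itos, stoi = build_vocab(docs, max_size=2)

# Tokens that did not make it into the vocabulary fall back to the <unk> index.
unk_idx = stoi["<unk>"]
encoded = [stoi.get(tok, unk_idx) for tok in ["good", "film", "plot"]]
print(itos)
print(encoded)
```

The two special tokens are added on top of `max_size`, which is why a vocabulary built with max_size=25000 can report 25,002 unique tokens.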

# Use BucketIterator to build iterators that yield batches.
# (Note: the <pad> tokens added to the input should not influence the model.)
BATCH_SIZE = 64

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

train_iterator, valid_iterator, test_iterator = data.BucketIterator.splits(
    (train_data, valid_data, test_data),
    batch_size=BATCH_SIZE,
    device=device)
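The idea behind bucketing is that batching sequences of similar length reduces the amount of padding. The following is a simplified sketch of that idea only; the real BucketIterator also shuffles, and the helper names and the padding index 1 are assumptions made for illustration.

```python
def make_batches(sequences, batch_size, pad_id, sort_by_length):
    """Group sequences into padded batches, optionally sorting by length first."""
    ordered = sorted(sequences, key=len) if sort_by_length else list(sequences)
    batches = []
    for start in range(0, len(ordered), batch_size):
        chunk = ordered[start:start + batch_size]
        width = max(len(seq) for seq in chunk)
        # Pad every sequence in the chunk to the length of the longest one.
        batches.append([seq + [pad_id] * (width - len(seq)) for seq in chunk])
    return batches


def count_padding(batches, pad_id):
    """Count how many padding tokens were added across all batches."""
    return sum(row.count(pad_id) for batch in batches for row in batch)


PAD_ID = 1  # hypothetical padding index; no real token below uses it
sequences = [[2] * 6, [3] * 1, [4] * 2, [5] * 5, [6] * 2, [7] * 1]

bucketed = make_batches(sequences, batch_size=2, pad_id=PAD_ID, sort_by_length=True)
unsorted = make_batches(sequences, batch_size=2, pad_id=PAD_ID, sort_by_length=False)
print(count_padding(bucketed, PAD_ID))  # 1
print(count_padding(unsorted, PAD_ID))  # 9
```

On this toy input, grouping by length cuts the padding from 9 tokens to 1.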

Reference:

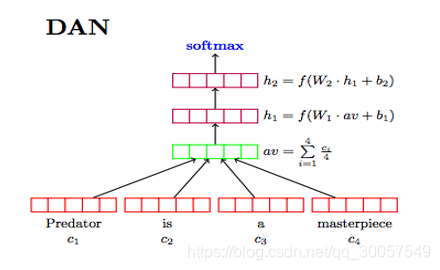

Going from simple to complex, we build a Word Averaging model, an RNN/LSTM model, and a CNN model in turn.

import torch.nn as nn
import torch.nn.functional as F

# Model definition
class WordAVGModel(nn.Module):
    def __init__(self, vocab_size, embedding_dim, output_dim, pad_idx):
        super().__init__()
        self.embedding = nn.Embedding(vocab_size, embedding_dim, padding_idx=pad_idx)
        self.fc = nn.Linear(embedding_dim, output_dim)

    def forward(self, text):
        embedded = self.embedding(text)  # [sent len, batch size, emb dim]
        embedded = embedded.permute(1, 0, 2)  # [batch size, sent len, emb dim]
        # Average over the sentence dimension with a (sent len, 1) pooling window.
        pooled = F.avg_pool2d(embedded, (embedded.shape[1], 1)).squeeze(1)  # [batch size, emb dim]
        return self.fc(pooled)
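The computation in this model is small enough to trace by hand. Here is a minimal plain-Python sketch of "average the word vectors, then apply a linear layer"; the 2-dimensional embedding table and the weights are made-up values for illustration, not trained parameters.

```python
def average_embeddings(token_ids, embedding_table):
    """Look up each token's vector and average them dimension by dimension."""
    vectors = [embedding_table[i] for i in token_ids]
    dim = len(embedding_table[0])
    return [sum(vec[d] for vec in vectors) / len(vectors) for d in range(dim)]


def linear(vector, weights, bias):
    """A single-output linear layer: dot product plus bias."""
    return sum(w * x for w, x in zip(weights, vector)) + bias


# Hypothetical embedding table: row i is the vector of token id i.
embedding_table = [[0.0, 0.0], [1.0, 3.0], [3.0, 1.0], [2.0, 2.0]]

pooled = average_embeddings([1, 2, 3], embedding_table)
logit = linear(pooled, weights=[0.5, -0.5], bias=0.1)
print(pooled)  # [2.0, 2.0]
print(logit)
```

Because the vectors are averaged, the result does not depend on word order: the token ids [3, 2, 1] give the same pooled vector.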

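The RNN acts as an encoder and effectively takes the place of the avg_pool2d layer.

The last hidden state h_T is used to represent the whole sentence.

Finally, a linear layer on top of it predicts the sentiment of the sentence.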
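To illustrate what "use the last hidden state as the sentence representation" means, here is a deliberately tiny sketch. It uses a scalar tanh RNN rather than an LSTM, and the weights are arbitrary assumptions; the point is only that the final state depends on word order, unlike an average.

```python
import math


def rnn_last_hidden(inputs, w_x, w_h, bias):
    """Run a scalar tanh RNN over the inputs and return the final hidden state."""
    h = 0.0
    for x in inputs:
        # Each step mixes the current input with the previous hidden state.
        h = math.tanh(w_x * x + w_h * h + bias)
    return h


# Two "sentences" with the same elements in a different order.
h_a = rnn_last_hidden([1.0, -1.0], w_x=1.0, w_h=0.5, bias=0.0)
h_b = rnn_last_hidden([-1.0, 1.0], w_x=1.0, w_h=0.5, bias=0.0)

# A linear layer on the last hidden state would then produce the sentiment logit.
w_out, b_out = 2.0, 0.0  # hypothetical output-layer weights
print(h_a, h_b)
print(w_out * h_a + b_out, w_out * h_b + b_out)
```

Both sequences have the same average (zero), yet their final hidden states have opposite signs, so the encoder can tell them apart.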

class RNN(nn.Module):
    def __init__(self, vocab_size, embedding_dim, hidden_dim, output_dim,
                 n_layers, bidirectional, dropout, pad_idx):
        super().__init__()
        self.embedding = nn.Embedding(vocab_size, embedding_dim, padding_idx=pad_idx)
        self.rnn = nn.LSTM(embedding_dim, hidden_dim, num_layers=n_layers,
                           bidirectional=bidirectional, dropout=dropout)
        # hidden_dim * 2 assumes bidirectional=True (forward and backward states are concatenated).
        self.fc = nn.Linear(hidden_dim * 2, output_dim)
        self.dropout = nn.Dropout(dropout)

    def forward(self, text):
        embedded = self.dropout(self.embedding(text))  # [sent len, batch size, emb dim]
        output, (hidden, cell) = self.rnn(embedded)
        # output = [sent len, batch size, hid dim * num directions]
        # hidden = [num layers * num directions, batch size, hid dim]
        # cell = [num layers * num directions, batch size, hid dim]

        # Concatenate the final forward (hidden[-2,:,:]) and backward (hidden[-1,:,:]) hidden states
        # and apply dropout.
        hidden = self.dropout(torch.cat((hidden[-2, :, :], hidden[-1, :, :]), dim=1))  # [batch size, hid dim * 2]
        # hidden is already 2-D; squeeze(0) would drop the batch dimension when the batch size is 1.
        return self.fc(hidden)

class CNN(nn.Module):
    def __init__(self, vocab_size, embedding_dim, n_filters,
                 filter_sizes, output_dim, dropout, pad_idx):
        super().__init__()
        self.embedding = nn.Embedding(vocab_size, embedding_dim, padding_idx=pad_idx)
        # One convolution per filter size; each kernel spans the full embedding dimension.
        self.convs = nn.ModuleList([
            nn.Conv2d(in_channels=1, out_channels=n_filters,
                      kernel_size=(fs, embedding_dim))
            for fs in filter_sizes
        ])
        self.fc = nn.Linear(len(filter_sizes) * n_filters, output_dim)
        self.dropout = nn.Dropout(dropout)

    def forward(self, text):
        text = text.permute(1, 0)  # [batch size, sent len]
        embedded = self.embedding(text)  # [batch size, sent len, emb dim]
        embedded = embedded.unsqueeze(1)  # [batch size, 1, sent len, emb dim]
        conved = [F.relu(conv(embedded)).squeeze(3) for conv in self.convs]
        # conved_n = [batch size, n_filters, sent len - filter_sizes[n] + 1]
        pooled = [F.max_pool1d(conv, conv.shape[2]).squeeze(2) for conv in conved]
        # pooled_n = [batch size, n_filters]
        cat = self.dropout(torch.cat(pooled, dim=1))
        # cat = [batch size, n_filters * len(filter_sizes)]
        return self.fc(cat)
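The shapes in the CNN are easier to follow on a one-dimensional toy case. The sketch below assumes an embedding dimension of 1, so each word is a single number and each filter is a short list of weights; the sequence and kernels are made up for illustration.

```python
def conv_over_time(sequence, kernel):
    """Slide the kernel over the sequence; the output has len(sequence) - len(kernel) + 1 entries."""
    fs = len(kernel)
    return [sum(k * x for k, x in zip(kernel, sequence[i:i + fs]))
            for i in range(len(sequence) - fs + 1)]


def relu(values):
    return [max(0.0, v) for v in values]


sequence = [1.0, -2.0, 3.0, 0.5, -1.0]      # a 5-word "sentence"
kernels = [[1.0, 1.0], [1.0, 0.0, -1.0]]    # filter sizes 2 and 3

pooled = []
for kernel in kernels:
    feature_map = relu(conv_over_time(sequence, kernel))
    print(len(feature_map), feature_map)
    # Max-over-time pooling keeps one number per filter, whatever the sentence length.
    pooled.append(max(feature_map))

print(pooled)  # [3.5, 4.0]
```

The feature maps have lengths 4 and 3 (sentence length minus filter size plus one), and pooling reduces each of them to a single value, which is what lets filters of different sizes be concatenated before the linear layer.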

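Beyond the model definitions, the RNN and CNN differ only in details such as their parameters and training settings, so they are not covered again; the rest of the walkthrough uses the Word Averaging model.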

# Count all parameters that have requires_grad set.
def count_parameters(model):
    return sum(p.numel() for p in model.parameters() if p.requires_grad)

# Hyperparameters needed by the model
INPUT_DIM = len(TEXT.vocab)
EMBEDDING_DIM = 100  # same as the dimension of the GloVe vectors
OUTPUT_DIM = 1
PAD_IDX = TEXT.vocab.stoi[TEXT.pad_token]

# Instantiate the model.
model = WordAVGModel(INPUT_DIM, EMBEDDING_DIM, OUTPUT_DIM, PAD_IDX)

# Load the pretrained word vectors into the embedding layer.
pretrained_embeddings = TEXT.vocab.vectors
model.embedding.weight.data.copy_(pretrained_embeddings)

# Initialize the embeddings of the UNK and PAD tokens to zeros.
UNK_IDX = TEXT.vocab.stoi[TEXT.unk_token]
model.embedding.weight.data[UNK_IDX] = torch.zeros(EMBEDDING_DIM)
model.embedding.weight.data[PAD_IDX] = torch.zeros(EMBEDDING_DIM)

# [Check the number of parameters]
print(f'The model has {count_parameters(model):,} trainable parameters')
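The parameter count of the Word Averaging model can be checked with simple arithmetic. The sketch below assumes the vocabulary ended up with 25,002 entries (25,000 words plus the two special tokens); the actual value of len(TEXT.vocab) depends on the data.

```python
def word_avg_parameter_count(vocab_size, embedding_dim, output_dim):
    """Embedding table plus one linear layer (weights and biases)."""
    embedding_params = vocab_size * embedding_dim
    linear_params = embedding_dim * output_dim + output_dim
    return embedding_params + linear_params


# Assumed sizes: 25,002 vocabulary entries, 100-dimensional embeddings, one output.
total = word_avg_parameter_count(vocab_size=25_002, embedding_dim=100, output_dim=1)
print(f"{total:,}")  # 2,500,301
```

Nearly all of the parameters sit in the embedding table; the classifier itself contributes only 101.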

# Train the model with the usual steps: zero_grad(), compute the loss, backpropagate, step the optimizer.
import torch.optim as optim

# Define the optimizer and the loss function.
optimizer = optim.Adam(model.parameters())
criterion = nn.BCEWithLogitsLoss()

model = model.to(device)
criterion = criterion.to(device)
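BCEWithLogitsLoss combines a sigmoid with binary cross-entropy and, with its default settings, averages over the batch. A plain-Python sketch of that computation follows; the numerically stable rearrangement shown here is a standard identity, and the function names are illustrative rather than PyTorch's internals.

```python
import math


def bce_with_logits(logit, target):
    """Stable form of -[t*log(sigmoid(x)) + (1-t)*log(1-sigmoid(x))]."""
    return max(logit, 0.0) - logit * target + math.log1p(math.exp(-abs(logit)))


def naive_bce(logit, target):
    """Direct formula; fine for moderate logits, but log(0) breaks it for extreme ones."""
    p = 1.0 / (1.0 + math.exp(-logit))
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def mean_bce_with_logits(logits, targets):
    """Average the per-example losses over the batch."""
    losses = [bce_with_logits(x, t) for x, t in zip(logits, targets)]
    return sum(losses) / len(losses)


print(bce_with_logits(0.0, 1.0))     # log(2): the model is undecided
print(bce_with_logits(1000.0, 0.0))  # large but finite loss for a confident mistake
print(mean_bce_with_logits([2.0, -1.5], [1.0, 1.0]))
```

Working on the raw logits is why the model's last layer has no sigmoid; the sigmoid is only applied explicitly when computing accuracy or making predictions.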

# Compute the accuracy.
def binary_accuracy(preds, y):
    """
    Returns accuracy per batch, i.e. if you get 8/10 right, this returns 0.8, NOT 8
    """
    # round predictions to the closest integer
    rounded_preds = torch.round(torch.sigmoid(preds))
    correct = (rounded_preds == y).float()  # convert into float for division
    acc = correct.sum() / len(correct)
    return acc
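As a worked example of the accuracy computation, the same logic can be written in plain Python. The logits and labels below are made-up values; the sketch thresholds the sigmoid output at 0.5, which is equivalent to checking whether the logit is positive.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def binary_accuracy_py(logits, labels):
    """Fraction of examples whose thresholded prediction matches the label."""
    preds = [1.0 if sigmoid(z) > 0.5 else 0.0 for z in logits]
    correct = sum(1 for p, y in zip(preds, labels) if p == y)
    return correct / len(labels)


logits = [2.0, -1.0, 0.3, -0.2]   # hypothetical raw model outputs
labels = [1.0, 0.0, 0.0, 0.0]
print(binary_accuracy_py(logits, labels))  # 0.75
```

Three of the four predictions match their labels; the third logit is slightly positive, so it is counted as a wrong "pos" prediction.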

# Train the model for one epoch.
def train(model, iterator, optimizer, criterion):
    epoch_loss = 0
    epoch_acc = 0
    model.train()

    for batch in iterator:
        optimizer.zero_grad()
        predictions = model(batch.text).squeeze(1)
        loss = criterion(predictions, batch.label)
        acc = binary_accuracy(predictions, batch.label)
        loss.backward()
        optimizer.step()

        epoch_loss += loss.item()
        epoch_acc += acc.item()

    return epoch_loss / len(iterator), epoch_acc / len(iterator)

# Evaluate the model.
def evaluate(model, iterator, criterion):
    epoch_loss = 0
    epoch_acc = 0
    model.eval()

    # No gradients are needed during evaluation.
    with torch.no_grad():
        for batch in iterator:
            predictions = model(batch.text).squeeze(1)
            loss = criterion(predictions, batch.label)
            acc = binary_accuracy(predictions, batch.label)

            epoch_loss += loss.item()
            epoch_acc += acc.item()

    return epoch_loss / len(iterator), epoch_acc / len(iterator)

# Compute the time taken by an epoch.
import time

def epoch_time(start_time, end_time):
    elapsed_time = end_time - start_time
    elapsed_mins = int(elapsed_time / 60)
    elapsed_secs = int(elapsed_time - (elapsed_mins * 60))
    return elapsed_mins, elapsed_secs

N_EPOCHS = 10
best_valid_loss = float('inf')

# Start the actual training.
for epoch in range(N_EPOCHS):
    start_time = time.time()
    train_loss, train_acc = train(model, train_iterator, optimizer, criterion)
    valid_loss, valid_acc = evaluate(model, valid_iterator, criterion)
    end_time = time.time()

    epoch_mins, epoch_secs = epoch_time(start_time, end_time)

    # Keep the checkpoint with the lowest validation loss.
    if valid_loss < best_valid_loss:
        best_valid_loss = valid_loss
        torch.save(model.state_dict(), 'wordavg-model.pt')

    print(f'Epoch: {epoch+1:02} | Epoch Time: {epoch_mins}m {epoch_secs}s')
    print(f'\tTrain Loss: {train_loss:.3f} | Train Acc: {train_acc*100:.2f}%')
    print(f'\t Val. Loss: {valid_loss:.3f} | Val. Acc: {valid_acc*100:.2f}%')

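# Make predictions: given a sentence, decide whether its sentiment is positive or negative.
import spacy

# 'en_core_web_sm' is the full name of the small English model (older spaCy versions also accepted the shortcut 'en').
nlp = spacy.load('en_core_web_sm')

def predict_sentiment(sentence):
    model.eval()  # switch to inference mode
    tokenized = [tok.text for tok in nlp.tokenizer(sentence)]
    indexed = [TEXT.vocab.stoi[t] for t in tokenized]
    tensor = torch.LongTensor(indexed).to(device)
    tensor = tensor.unsqueeze(1)  # [sent len, batch size = 1]
    prediction = torch.sigmoid(model(tensor))
    return prediction.item()

# Run a couple of predictions; in the author's run they turned out fairly accurate.
print(predict_sentiment("This film is terrible"))
print(predict_sentiment("This film is great"))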
